What 954 Court Filings Tell Us About AI Hallucinations

When lawyers started citing fake cases generated by ChatGPT, the legal world noticed. That was 2023.

Now we have 954 documented instances across 38 countries. Judges, attorneys, legal researchers. All caught submitting fabricated content to courts. Not once or twice. Nearly a thousand times.

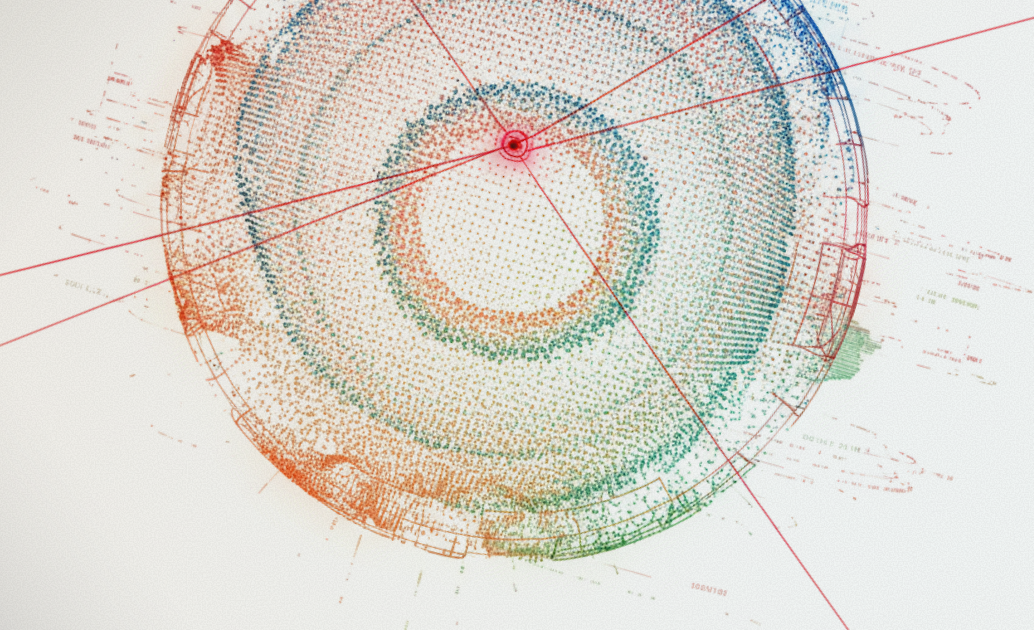

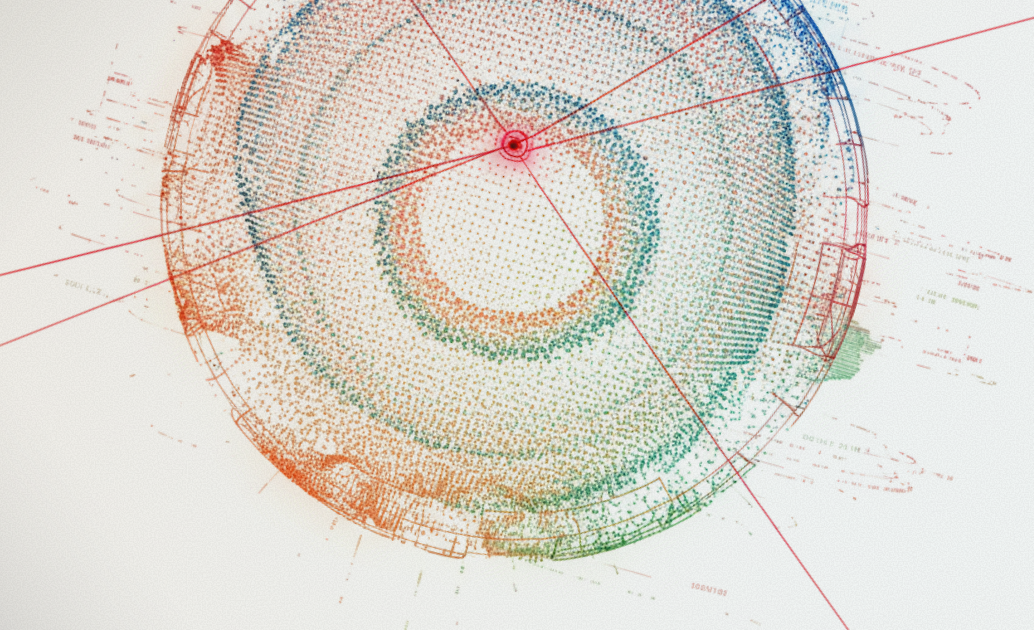

Damien Charlotin, a researcher at HEC Paris, has been tracking every case where AI-generated hallucinations made it into official court filings: fabricated citations, false quotes from real cases, misrepresented legal holdings, and dangerously outdated advice, all submitted as legitimate legal authority.

These aren't amateurs. These are trained legal professionals who know the stakes. And they're still getting it wrong.

May 2025 alone saw 32 new cases. More than all of 2023 combined. Courts are now issuing sanctions, referring attorneys for discipline, and publishing warnings that "hallucinated cases are no longer aberrations."

Take Mata v. Avianca. Two New York attorneys used ChatGPT to draft a legal brief and submitted it to federal court. The brief cited six cases as precedent. All six were completely fabricated. Fake case names, fake citations, fake legal reasoning. The court sanctioned both attorneys. It has become the reference point whenever the topic comes up.

Or the Arizona Social Security appeal where 12 of 19 citations were completely fabricated. The judge called the brief "replete with citation-related deficiencies, including those consistent with artificial intelligence generated hallucinations."

Some fabrications were sophisticated: real judge names, plausible case numbers, legal reasoning that passed a quick read. The AI wasn't guessing randomly. It was confabulating coherently.

Courts caught these errors because the system has adversarial review. Opposing counsel checks citations. Judges verify precedent. Someone eventually notices when a cited case doesn't actually exist.

If this is happening in courtrooms with adversarial review, what's happening in contexts without it?

When an AI system tells a patient that a medical device treats conditions it's not approved for, who's checking? There's no opposing counsel. No verification layer. Just a confident answer that may or may not be true.

ChatGPT now has 800 million weekly active users. Seventy percent of searches end without a click because people get their answers straight from AI. These systems have become a primary way people research products. And the number of claims they make about your brand grows every day.

The legal cases show three hallucination patterns that map directly to regulated product claims.

Fabricated facts. In court filings, AI invents case law that never existed. In healthcare, it invents clinical trial results or FDA clearances. In financial services, it fabricates performance data or regulatory approvals.

False attribution. In legal briefs, AI creates fake quotes from real cases. In healthcare, it attributes statements to physicians or studies that never made those claims. In insurance, it misrepresents policy terms or coverage details.

Misrepresented reality. AI gets the case name right but completely distorts the holding. Same thing with regulated products: it describes a real feature accurately, then overstates the indication, capability, or safety profile.
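One way to picture a review step built around these three patterns is a small classifier over claims that have already been pulled out of an AI answer. The Python below is a minimal sketch under stated assumptions, not a production check: the `Claim` structure, the `APPROVED_FACTS` and `APPROVED_SOURCES` records, and the product details are all hypothetical, and exact string matching stands in for whatever comparison a real review would need.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Pattern(Enum):
    FABRICATED_FACT = "fabricated fact"
    FALSE_ATTRIBUTION = "false attribution"
    MISREPRESENTED_REALITY = "misrepresented reality"


@dataclass(frozen=True)
class Claim:
    topic: str                    # what the claim is about, e.g. "indication"
    value: str                    # what the AI answer asserted
    source: Optional[str] = None  # who the AI says made the statement, if anyone


# Hypothetical approved record for one made-up product (illustration only).
APPROVED_FACTS = {
    "indication": "adult knee pain",
    "regulatory_status": "cleared",
}
APPROVED_SOURCES = {"manufacturer labeling"}


def classify(claim: Claim) -> Optional[Pattern]:
    """Return the pattern a claim matches, or None if it checks out."""
    # A statement pinned on a source that never made it.
    if claim.source is not None and claim.source not in APPROVED_SOURCES:
        return Pattern.FALSE_ATTRIBUTION
    # A "fact" about something the approved record does not contain at all.
    if claim.topic not in APPROVED_FACTS:
        return Pattern.FABRICATED_FACT
    # A real topic, but the stated value differs from what was approved.
    if claim.value != APPROVED_FACTS[claim.topic]:
        return Pattern.MISREPRESENTED_REALITY
    return None
```

For example, `classify(Claim("indication", "all joint pain"))` would flag an overstated indication as misrepresented reality, while a claim about a trial result that appears nowhere in the record would come back as a fabricated fact. Turning free-text AI answers into structured claims is the harder part, and this sketch assumes it has already happened.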

Courts eventually catch these. For most companies, there's no equivalent review process for what AI systems say about their products.

Air Canada is a good example. Their chatbot gave a customer incorrect information about bereavement fares. When the customer relied on it and requested a refund, Air Canada argued the chatbot was "a separate legal entity responsible for its own actions." The tribunal rejected that immediately. The company was liable for what its AI said.

That was a $650 fare dispute. The stakes look different when the claims involve a medical device's approved indications, a drug's efficacy, or a financial product's regulatory status.

"The AI made it up" isn't a legal defense.

The FDA has already issued warning letters for AI-related medical claims. FINRA now requires documentation of AI communications. AI hallucinations cost businesses $67.4 billion in 2024, and companies are facing lawsuits over AI-generated misinformation.

Getting AI systems accurate about your products now is a much easier problem than explaining to regulators later why you didn't.
